Research

Spanning many areas and involving various application domains, my research interests are primarily in computer graphics (in its broad sense) and related fields (such as GPU computing, domain-specific languages, and machine learning).

One fading focus of my work has been on procedural modeling, where I strive to extend what can be (practically) modeled and to improve the ease of the modeling task. Formerly, an emphasis of my research was on interactive and real-time rendering, which often involved making creative and effective use of the (graphics) hardware and APIs.

Selected Past Projects

-

Procedural Modeling · 2014 · Grammar-Based Procedural Modeling · Next-Gen Language

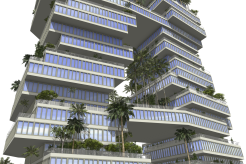

While procedural modeling techniques are a powerful tool for creating digital architectural content, existing grammar-based approaches suffer from several fundamental limitations that preclude the realization of many modeling tasks. In particular, grammar rules can only encode purely local refinement decisions for a single shape, generally without any knowledge about other shapes, which severely hinders context-sensitive designs. With CGA++, we developed a novel grammar language that significantly increases expressiveness and substantially widens the spectrum of what can be realized. One key element is exposing the shapes worked on and the shape tree induced by the ongoing derivation process as first-class citizens in the grammar. This enables referencing and querying other shapes, performing operations involving multiple shapes (such as Boolean operations), and spawning new embeddable shape trees (including via rewriting existing subtrees). Another cornerstone is the introduction of events, which allow coordination and interaction among multiple shapes. Events serve as synchronization points and feature a general, functional formulation that is amenable to composition and reuse: an event takes a set of participating shapes and returns a corresponding action for each.

-

Procedural Modeling · 2011/2012 · Lighting Design · Procedural Exterior Lighting with Complex Constraints

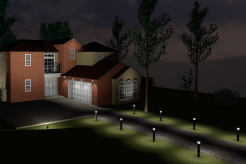

The exterior lighting of buildings significantly contributes to their visual appearance, but modeling light sources such that a reasonable result is obtained often proves difficult and tedious. To facilitate that process, we developed a system that takes a specification of a desired lighting design as input and automatically determines a corresponding lighting solution, comprising luminaires placed in the scene. A design is specified in terms of illumination goals, potential luminaire locations, and constraints. The latter are crucial to imposing aesthetic and structural considerations and may introduce complex interdependencies. To determine a solution satisfying the goals well while meeting all constraints, we employ a stochastic optimization and constraint satisfaction scheme. It directly operates on the complexly shaped, mixed discrete/continuous subspace where all constraints are satisfied and takes the actual lighting situation into account when navigating this space, thus keeping the required number of iterations manageable. We integrated our lighting design approach into a grammar-based procedural modeling system, featuring several new (lighting-design-related and general) language extensions. In particular, the design can be specified procedurally as part of the ordinary modeling workflow.

-

Procedural Modeling · 2011 · Proceduralization of Designs · Varied Relayouting of Façades

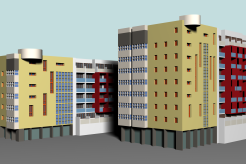

Obtaining a generalized, procedural description of a given design allows adapting it to different sizes and generating multiple variations. Focusing on façades, we developed a framework for proceduralizing an input layout and creating new, varied instances of it. Our representation comprises a hierarchy of labeled regions and a set of constraints governing their (re)arrangement. The regions are derived from a hierarchical segmentation of the input façade (image), while the constraints capture essential aspects of the layout that should be maintained, such as alignments, occurrence frequencies, or which elements may be placed next to each other. Because both the segmentation and the constraint modeling are usually subjective and require higher-level semantic knowledge, they are done semi-automatically. Given such a procedural design and a target size, new variations observing all constraints are computed using a combination of heuristic search and quadratic programming.

-

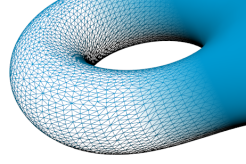

Real-Time Rendering · GPU Computing · 2010 · Voxelization · Data-Parallel Algorithms

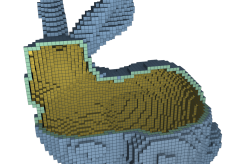

Voxel-based representations are increasingly employed in real-time applications. Addressing their efficient dynamic generation, we developed several data-parallel GPU-based voxelization algorithms that (unlike previous solutions) both yield accurate results and achieve real-time performance. They adopt a software rasterization approach and utilize a new triangle/box overlap test. Supporting binary, conservative (26-separating) and thin (6-separating) surface as well as solid voxelizations, the algorithms cover a wide range of use cases. Typically, they operate on a full voxel grid, but we also introduced direct (solid) voxelization into a memory-saving, sparse, octree-based representation, where uniform volume regions are efficiently encoded.
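As a rough illustration of what a conservative surface voxelization computes, the following sequential Python sketch marks every voxel whose box overlaps a triangle. It uses the classic separating-axis triangle/box test rather than the new overlap test mentioned above, and it ignores the data-parallel GPU formulation entirely. The unit-sized voxels, the grid layout, and all function names are assumptions made for this example.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def triangle_box_overlap(tri, center, half):
    """Separating-axis test between a triangle and an axis-aligned box.

    tri    -- three vertices (x, y, z)
    center -- box center
    half   -- box half-extents along x, y, z
    """
    # Work in a frame where the box is centered at the origin.
    v = [sub(p, center) for p in tri]
    edges = [sub(v[1], v[0]), sub(v[2], v[1]), sub(v[0], v[2])]
    box_axes = [(1, 0, 0), (0, 1, 0), (0, 0, 1)]

    # 13 candidate axes: 3 box normals, 1 triangle normal, 9 edge cross products.
    axes = list(box_axes)
    axes.append(cross(edges[0], edges[1]))
    axes += [cross(e, a) for e in edges for a in box_axes]

    for axis in axes:
        if dot(axis, axis) < 1e-12:
            continue  # degenerate axis (edge parallel to a box axis)
        proj = [dot(p, axis) for p in v]
        # Radius of the box when projected onto this axis.
        r = sum(half[i] * abs(axis[i]) for i in range(3))
        if min(proj) > r or max(proj) < -r:
            return False  # found a separating axis
    return True

def voxelize_conservative(tri, n):
    """Return the set of unit voxels (i, j, k) in an n^3 grid overlapped by tri."""
    lo = [max(0, math.floor(min(p[a] for p in tri))) for a in range(3)]
    hi = [min(n - 1, math.floor(max(p[a] for p in tri))) for a in range(3)]
    voxels = set()
    for i in range(lo[0], hi[0] + 1):
        for j in range(lo[1], hi[1] + 1):
            for k in range(lo[2], hi[2] + 1):
                center = (i + 0.5, j + 0.5, k + 0.5)
                if triangle_box_overlap(tri, center, (0.5, 0.5, 0.5)):
                    voxels.add((i, j, k))
    return voxels

tri = ((0.2, 0.2, 0.5), (2.6, 0.2, 0.5), (0.2, 2.6, 0.5))
print(sorted(voxelize_conservative(tri, 3)))
```

Each voxel is tested independently of all others, which hints at why this kind of computation maps well onto data-parallel hardware.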

-

Real-Time Rendering · 2007/2008 · Real-Time Soft Shadows · Higher Accuracy with Occlusion Bitmasks

Shadows are crucial for a realistic appearance and provide important visual cues. Aiming at high quality in real time, we presented occlusion bitmasks, a new approach for visibility determination that explicitly tracks light sample points and thereby robustly enables correct occluder fusion and directly supports multiple depth layers and multi-colored light sources. Adopting shadow-map-based occluder backprojection, we introduced in-shader rasterization of backprojected micro-occluders into an occlusion bitmask, complemented by new occluder approximations derived from a shadow map as well as new acceleration structures for concentrating computational efforts on relevant occluders. Additionally, we devised a visibility filtering approach for efficient multisample (antialiasing) support and a scheme for smoothly varying shadow quality to locally adapt rendering costs.

Details: “Bitmask soft shadows”, “Microquads revisited”, “Quality scalability”, and our book.
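The core idea of tracking light sample points in a bitmask can be sketched in a few lines of Python. This is a simplified CPU illustration, not the in-shader implementation: it assumes a square area light sampled on a regular 4×4 grid and treats each occluder as an axis-aligned rectangle that has already been backprojected onto the light's unit square. The function names and the rectangle representation are hypothetical.

```python
def occluder_mask(rect, grid=4):
    """Bitmask of the light samples covered by one backprojected occluder.

    rect -- (x0, y0, x1, y1) in the light's unit square (assumed representation)
    Bit j*grid + i corresponds to the sample at ((i + 0.5)/grid, (j + 0.5)/grid).
    """
    x0, y0, x1, y1 = rect
    mask = 0
    for j in range(grid):
        for i in range(grid):
            sx, sy = (i + 0.5) / grid, (j + 0.5) / grid
            if x0 <= sx <= x1 and y0 <= sy <= y1:
                mask |= 1 << (j * grid + i)
    return mask

def visibility(masks, grid=4):
    """Fraction of light samples not blocked by any occluder."""
    combined = 0
    for m in masks:
        combined |= m  # OR-ing fuses overlapping occluders correctly
    blocked = bin(combined).count("1")
    return 1 - blocked / (grid * grid)

left = occluder_mask((0.0, 0.0, 0.5, 1.0))     # blocks 8 of 16 samples
middle = occluder_mask((0.25, 0.0, 0.75, 1.0))  # blocks 8 of 16 samples
print(visibility([left, middle]))  # 0.25
```

Because the two occluders share four samples, simply adding their individual coverage (8 + 8 of 16) would report a fully shadowed point, whereas the combined bitmask blocks only 12 samples.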

-

Real-Time Rendering · GPU Computing · 2008 · Curved Surface Rendering · Compute-Based Adaptive Tessellation

Visually smooth surfaces are often modeled with curved surface primitives. Targeting their direct rendering in an efficient and accurate manner via adaptive tessellation (and predating dedicated tessellation support in graphics APIs), we devised the flexible, compute-based CudaTess framework, which readily supports large collections of surfaces. It operates in a patch-parallel way and performs all major tasks completely on the GPU, including dynamically determining surface sample points and creating the tessellation topology. Furthermore, it pays special attention to deriving consistent tessellation factors and allows for efficiently evaluating the surface, supporting fast forward differencing schemes for parametric surfaces.
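To illustrate the forward differencing idea mentioned above, here is a minimal Python sketch for a single cubic polynomial sampled at uniform steps. It is not taken from CudaTess; a parametric surface would apply the same scheme per coordinate and parameter direction, and the function name and argument layout are assumptions for this example.

```python
def forward_difference_cubic(a, b, c, d, h, steps):
    """Evaluate f(t) = a*t^3 + b*t^2 + c*t + d at t = 0, h, 2h, ..., steps*h.

    After the initial setup, each further sample costs only three additions.
    """
    f = d                                  # f(0)
    d1 = a * h**3 + b * h**2 + c * h       # first forward difference at t = 0
    d2 = 6 * a * h**3 + 2 * b * h**2       # second forward difference at t = 0
    d3 = 6 * a * h**3                      # third forward difference (constant)
    values = []
    for _ in range(steps + 1):
        values.append(f)
        f += d1
        d1 += d2
        d2 += d3
    return values

print(forward_difference_cubic(1, -2, 3, 4, 1, 4))  # [4, 6, 10, 22, 48]
```

With floating-point step sizes, rounding errors accumulate over the additions, so the number of steps per patch is typically kept moderate.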

-

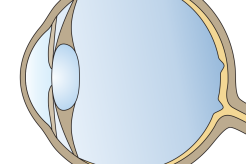

Perception · Real-Time Rendering · 2006–2008 · Visual Sensitivity · Exploiting Limitations and Predicting Artifact Perceptibility

Since displayed graphics are ultimately viewed by people, taking human visual perception into account can be worthwhile for many algorithms and applications. Rendering efficiency may be improved by exploiting the visual system's limited sensitivity to avoid spending effort on producing detail that remains imperceptible to the viewer. Within the interdisciplinary EU project CROSSMOD, I contributed to an interactive perceptual rendering pipeline built on this idea. It leverages sensitivity-reducing effects (such as visual masking) on a scene level, quantified via real-time threshold maps, to guide the selection of an appropriate geometric level of detail. When switching between different representations, visual popping artifacts may occur that impact the perceived degree of realism. To estimate the perceptibility of such artifacts, I devised a perceptually motivated predictor, which includes a spatio-velocity color vision model.

-

GPU Computing · 2005 · Medical Imaging · GPU-Assisted CT Reconstruction

Many tasks in medical imaging can benefit from the computational power and high memory bandwidth offered by graphics hardware. Addressing the reconstruction process for computed tomography, my (pre-compute-API-era) Diploma thesis presents highly optimized GPGPU solutions for the parallel-beam, fan-beam, and circular cone-beam geometries (including the special case of C-arm systems), which outperform previous GPU-based methods on comparable hardware, in some cases by more than an order of magnitude. For the important helical cone-beam setting, the first GPU-based reconstruction approach is introduced. Additionally, I devised GPGPU implementations of related post-processing tasks, such as edge-preserving filtering and ring artifact reduction.